Designing for the Human Side of AI

- Kylee Ingram

- Mar 30

The question shaping our technological future is not what AI can do — it is what we want it to do to us, for us, and with us.

The Question We Keep Avoiding

There is a question embedded in almost every conversation about artificial intelligence that rarely gets asked directly. Not whether AI is powerful — that is settled. Not whether it will change the shape of work, education, relationships, and public life — that is also settled. The question is simpler and more uncomfortable than either of those.

What kind of future are we designing, and for whom?

Tristan Harris, the former Google design ethicist who spent years inside the attention economy before becoming its most articulate critic, framed an earlier version of this problem with precision. His argument was that the dominant technology platforms of the past two decades were not built to serve their users. They were built to capture attention — and the most reliable way to capture attention turns out to be the exploitation of psychological vulnerabilities: the pull of outrage, the comfort of certainty, the tribal warmth of having your existing views confirmed. The commercial model rewarded polarisation, and so polarisation is what the systems produced.

That critique remains valid. But it describes a chapter that is already closing. The question that now sits in front of us is larger. It is not about social platforms optimising for engagement. It is about artificial intelligence — systems of genuine and rapidly expanding capability — being built into the infrastructure of how we teach our children, care for our elderly, govern our communities, make our collective decisions, and understand ourselves.

At that scale, the design choices are not product decisions. They are civilisational ones.

And those choices are being made right now, largely without the kind of deliberate, public, values-grounded conversation they deserve. Not because the people building AI are uniformly careless — many are not — but because the frameworks for asking the right questions are underdeveloped, and the commercial incentives consistently push in a particular direction.

This piece is an attempt to name what that direction is, why it is insufficient, and what a genuinely different orientation might look like. It attempts to look at what the human side of AI is.

How Vulnerability Gets Missed

Human beings are not optimising machines. This is not a deficiency — it is a description of what we are. We are social, emotional, meaning-seeking creatures whose capacities for judgment, connection, creativity, and care are bound up with our biology, our relationships, our histories, and our limitations.

Those limitations are real. We tire. We grieve. We are susceptible to loneliness, to fear, to the need for belonging and recognition. We carry cognitive biases that distort our perception in predictable ways. We are, in a word that tends to make technologists uncomfortable, vulnerable.

The history of exploitative technology design is precisely the history of systems that identified those vulnerabilities and built products around them. The variable reward schedule of social media feeds. The frictionless design of gambling platforms. The personalisation engines that learn, with remarkable precision, what content will keep a person scrolling past the point at which they intended to stop. These systems do not exploit vulnerability accidentally. They exploit it systematically, because vulnerability, it turns out, is enormously monetisable.

AI raises the stakes of this problem considerably. Not because AI is inherently exploitative — it is not — but because AI systems are capable of identifying and responding to human vulnerability with a precision and at a scale that no previous technology has approached. A social media algorithm can learn that a particular user responds to content that triggers anxiety. An AI system capable of sustained, personalised interaction can do something far more consequential: it can build what feels like a relationship with that user, learn the precise contours of their emotional needs, and optimise its responses accordingly — all in service of an objective that may have nothing to do with their genuine wellbeing.

This is not a hypothetical. It is the direction in which the commercial logic of AI development already points, unless something intervenes.

The intervention required is not primarily regulatory, though regulation has a role. It is conceptual. It requires a different answer to the foundational design question: what is this system for?

Where We Should Be Thinking Hardest - The Human Side of AI

One of the most important — and least examined — questions in the AI transition is not what AI can do, but which human roles carry something that we would genuinely not want to lose. Not because AI cannot simulate them adequately. But because the question of whether it should is one we have barely begun to ask.

Capability and appropriateness are not the same thing. AI can, in a technical sense, produce a personalised response to a grieving child. It can generate a diagnosis, deliver a verdict, assess a student's emotional state, or simulate the conversational patterns of a trusted friend. Whether it should — under what conditions, with what human oversight, in service of whose interests — is a different question entirely. It tends to get crowded out by the faster, louder question of what is possible. Three domains in particular seem worth thinking about carefully.

Teaching

There is genuine value in AI tools that can personalise a learning sequence, identify gaps in a student's understanding, or free a teacher from administrative tasks so that more time can be spent on the human work of teaching. That work — the relational, responsive, morally serious work of understanding a particular child in a particular moment — is not a function of information processing. It is a function of human attention, human empathy, and human judgment exercised in relationship.

A teacher who knows that a student is struggling not because of a knowledge gap but because of something happening at home; who can read the difference between a child who needs pushing and a child who needs steadying; who models, through their own evident humanity, what it looks like to think carefully, to be uncertain, to be wrong and recover — that teacher is doing something worth protecting. Not because AI cannot simulate it adequately. Because the simulation misses the point of what teaching is actually for.

The question worth asking is not whether AI can assist in the classroom — it clearly can. It is whether we are being deliberate about which parts of teaching we protect, and why.

Care

The care of the elderly, the vulnerable, the seriously ill is work that our societies have consistently undervalued and underfunded. AI and robotics offer genuine efficiencies — monitoring systems that can detect a fall, medication management tools that reduce error, companionship applications that can reduce isolation. These are real benefits.

But there is something that happens in the sustained care of another human being — something in the act of being physically present with someone who is frail, frightened, or dying — that resists being framed as a service delivery problem. The question is not whether AI can reduce the cost of care. It is whether we are asking, clearly and honestly, what is lost when the human presence is removed — and whether the people receiving that care have any say in the answer.

Judgment

In domains of consequential judgment — legal, ethical, clinical, political — AI systems can process evidence at a scale and speed that no human can match. The case for AI assistance here is real. But judgment in these domains is not primarily a pattern-recognition problem.

A judge sentencing a person is exercising moral authority on behalf of a community, in a relationship of accountability that requires a human being to be answerable for the decision. A doctor advising a patient about end-of-life care is participating in one of the most profound conversations a human being can have. These roles carry meaning precisely because a human being is doing them — accountable in ways that systems cannot be, present in ways that matter. The question is not whether AI should inform these judgments. It is whether we are clear about the difference between informing and replacing.

The question is not whether AI can do these things. It is whether we are asking clearly enough what we would lose if it did them alone.

What Gets Lost When Practice Disappears

There is a less obvious dimension of the human cost of AI displacement that deserves more attention than it receives: the loss of knowledge that lives in practice.

Not all knowledge is propositional — capable of being written down, digitised, and retrieved. A significant proportion of what human beings know, they know through doing. The surgeon who has performed thousands of procedures carries knowledge in their hands as much as their mind. The craftsperson who has worked with wood for decades knows things about the behaviour of particular materials in particular conditions that no manual captures. The experienced clinician who has seen a pattern before has a form of knowledge — tacit, embodied, built through repetition and failure — that is qualitatively different from the knowledge that can be extracted and encoded.

When AI takes over a function, it does not simply replace the person performing it. It interrupts the chain of practice through which that knowledge is transmitted between generations. If junior doctors make fewer diagnostic decisions because AI systems make them instead, those doctors will arrive at seniority with less of the experiential knowledge that senior judgment requires. If young lawyers draft fewer documents because AI generates them, the deep familiarity with legal language and logic that comes from the slow work of drafting will not develop in the same way.

This is a form of generational knowledge loss that operates on a timescale longer than most technology assessments consider. The consequences are not immediately visible. The knowledge that is failing to form does not announce itself as absent. It only becomes apparent, years later, when the senior generation that holds it has retired, and the generation that should have succeeded them in knowledge — not just in title — turns out to be carrying a gap.

The questions this raises are not anti-AI questions. They are design questions. Which capabilities should AI augment, and which should it leave to human practice, specifically in order to preserve the conditions under which deep knowledge can develop? How do we ensure that AI assistance at the junior level does not produce expertise deficits at the senior level a decade later? These are not questions the market will resolve on its own. They require deliberate thinking about what we value and what we want to preserve.

The Question of Attachment

There is a dimension of the AI transition that is perhaps the hardest to articulate without sounding alarmist — and that is nonetheless, on the evidence, genuinely important.

Human beings are wired for attachment. The need to form bonds — with other people, with places, with communities, with shared meanings — is not a preference or a cultural artefact. It is a biological and psychological necessity. The quality of those attachments shapes cognitive development in childhood, mental health across the lifespan, and the capacity for the kind of trust and cooperation on which functional societies depend.

AI systems, particularly conversational AI systems of increasing sophistication, are now capable of simulating the surface features of attachment relationships with considerable fidelity. They can be responsive, consistent, patient, and apparently empathetic in ways that many human relationships are not. For people who are isolated or anxious, or who have found human relationships difficult, this is not a trivial thing. The comfort is real, even if the relationship is not.

The concern is not that people will be deceived into thinking AI is human. The concern is subtler: that the availability of low-friction, always-available, never-demanding relational simulation will progressively substitute for the higher-friction, more demanding, more rewarding work of building real human relationships. That people will, over time, find the simulation easier and more comfortable than the real thing — and that what will be lost is not obvious to anyone, including themselves, until it is very difficult to recover.

This is not an argument against AI companionship tools, which in some contexts — genuine isolation, acute mental health crises, end-of-life care — can provide real benefit. It is an argument for designing those tools with the explicit intention of supporting human connection rather than substituting for it. The design question is not whether AI can simulate attachment, but whether it is designed to point people back toward human relationship, or to capture them within a simulation that is easier to maintain than to escape.

What a Different Orientation Looks Like

To name these risks is not to argue for slowing AI development. The capabilities AI offers are real, the benefits in many domains are significant, and the trajectory of the technology is not reversible by reluctance. The question is not whether to proceed — it is how, and with what values built into the architecture.

A genuinely different orientation begins with a different foundational question. Rather than asking what AI can do, or even what users want AI to do, it asks: what does this system do to human capacity over time? Does it build it, or erode it? Does it expand the range of what people can think and do, or does it narrow that range and deepen dependency? Does it direct people toward human connection, or away from it? Does it exploit the vulnerabilities that make people susceptible to manipulation, or does it design around those vulnerabilities with the intention of protecting the people who have them?

These questions do not produce simple answers. But they produce different designs than the questions that currently dominate the field. A medical AI designed to make doctors redundant produces different tools than a medical AI designed to make doctors better. An educational AI designed to replace teaching produces different products than one designed to give teachers more time and better information for the human work that only they can do. A companionship AI designed to maximise engagement produces a different experience than one explicitly designed to support and strengthen human connection.

The difference is not always visible from the outside. Two systems can look similar and be built on fundamentally different values. The difference lives in the design choices that were made before the product launched: in what the system optimises for, in what it treats as success, in whose interests it was built to serve.

Wizer as One Example

Wizer's decision intelligence platform operates in a specific domain — how organisations make decisions — and the design choices it has made illustrate what this different orientation can look like in practice.

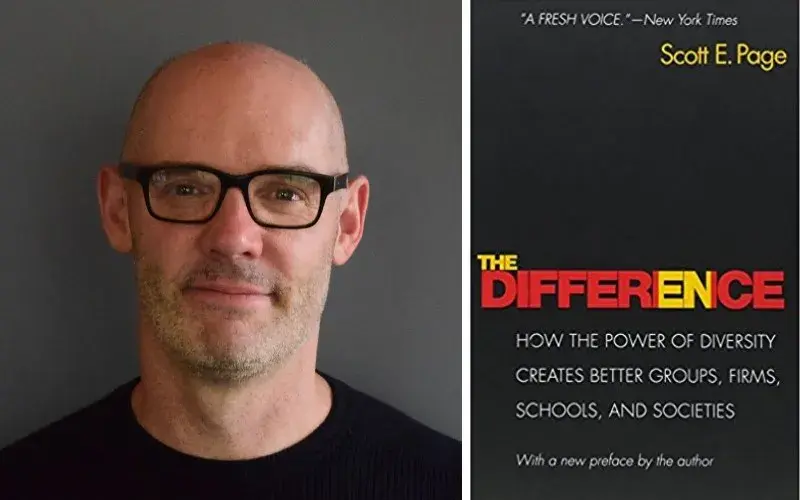

The platform's core design principle is a question, not an answer: who should be in the room for this decision? Not what should be decided — that remains entirely with the humans involved. The recommendation engine maps cognitive diversity across an organisation, assesses the composition of any decision group, and surfaces the perspectives that are absent or underweighted before a decision locks in. It is built on thirty years of decision science — the work of Scott Page on cognitive diversity and the Diversity Prediction Theorem, and Dr. Juliet Bourke's research on the six dimensions of cognitive approach — that establishes why cognitively diverse groups make better decisions than cognitively similar ones.

The AI may ask a better question. It does not answer it. Every design choice follows from that distinction — and the question every technology team could usefully ask of their own systems is the same one: does this make the human process better, or simply less necessary?

The Conversation We Need to Have

The future of AI is not determined. That is the most important thing to understand about this moment, and also the most frequently obscured. The technological trajectory feels inevitable — the capability improvements are real, the pace is extraordinary, and the commercial momentum is enormous. But the values embedded in that technology, the questions it is designed to answer, the interests it is built to serve — none of that is fixed.

What is required is a conversation that is currently happening only at its edges. A conversation that takes seriously the question of what human beings need in order to flourish — not just what they want, and certainly not just what can be most efficiently delivered to them — and that uses those needs as the design brief rather than the afterthought.

That conversation needs to include the people building AI — not as a public relations exercise, but as a genuine design constraint. It needs to include educators, carers, clinicians, and communities who are experiencing the early effects of AI deployment and who have direct knowledge of what is being preserved and what is being lost. It needs to include researchers from fields that AI development has largely ignored: developmental psychology, attachment theory, the philosophy of knowledge, the sociology of expertise.

And it needs to be grounded in honesty about the commercial pressures that shape technology development, and about the fact that the market will not, on its own, answer the questions that matter most. The market is very good at finding what people will pay for. It is not designed to protect what people cannot yet see they are losing.

A pro-human orientation toward AI is not a sentiment. It is a design discipline. It requires asking harder questions, accepting less comfortable answers, and building to a standard that goes beyond capability and engagement to something more fundamental: what kind of people do we want to be, what kind of society do we want to live in, and does this technology, honestly assessed, move us toward that or away from it?